Doctoral Thesis of Lucas Nunes Alegre

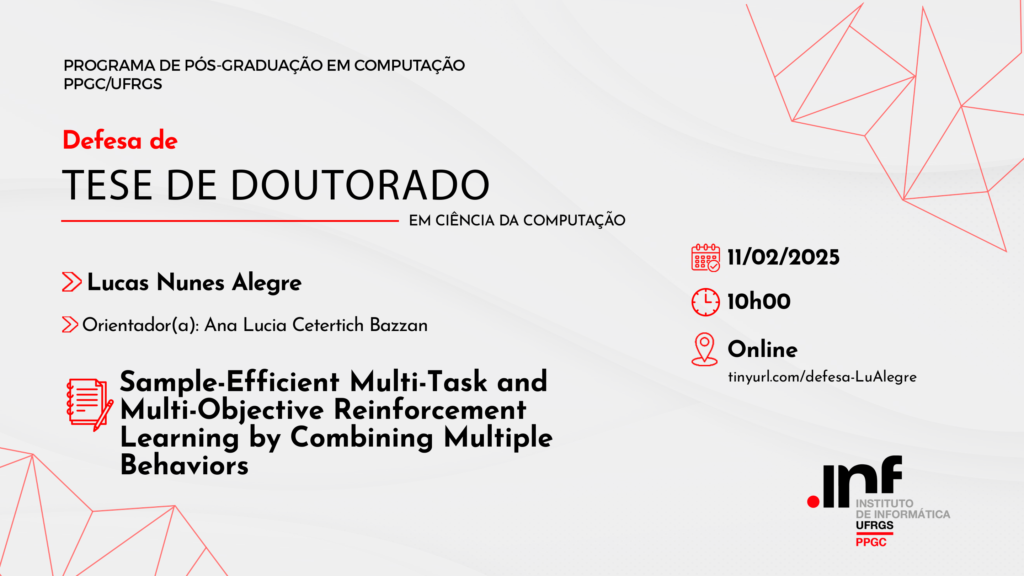

Doctoral Thesis Defense

Student: Lucas Nunes Alegre

Advisor: Ana Lucia Cetertich Bazzan

Title: Sample-Efficient Multi-Task and Multi-Objective Reinforcement Learning by Combining Multiple Behaviors

Research Line: Machine Learning, Knowledge Representation and Reasoning

Date: February 11, 2025 (11/02/2025)

Time: 10:00

Location: The defense will be held remotely. Public access is available at https://meet.google.com/xzq-firu-xfq.

Examining Committee:

- Anderson Rocha Tavares (Universidade Federal do Rio Grande do Sul)

- Fredrik Heintz (Linköping University)

- Patrick Mannion (University of Galway)

Committee Chair: Ana Lucia Cetertich Bazzan

Abstract: When solving sequential decision-making problems, humans exhibit different behaviors depending on the problem (or task) they need to solve at a given moment. One of the main challenges in artificial intelligence, and in reinforcement learning (RL) in particular, is the development of generalist and flexible agents capable of solving multiple tasks, each of which may require the agent to learn a new, specialized behavior. Importantly, tackling this challenge requires agents to learn behaviors that may involve either optimizing a single objective or trading off multiple conflicting objectives. We argue that many important real-world tasks are naturally defined by multiple objectives, and that prioritizing these objectives differently may require the agent to adapt its behavior accordingly. In this thesis, we study how to design flexible RL agents that can adapt their behavior, in a sample-efficient manner, to solve any given task, where each task may be defined by multiple conflicting objectives. The main hypothesis of this thesis is that it is possible to meaningfully combine insights from two apparently disparate sub-fields of machine learning, multi-objective RL and multi-task RL, to design novel and principled techniques that address this problem. In particular, such insights arise from the fact that both fields typically deal with problems in which an agent needs to learn multiple behaviors (policies). We introduce new multi-policy methods that empower RL agents to (i) carefully learn multiple behaviors, each specialized in a different task, or in tasks in which the agent assigns different priorities, or preferences, to each of the underlying objectives it needs to achieve; and (ii) combine previously acquired behaviors to efficiently identify solutions to new tasks.
The methods we investigate have important theoretical guarantees regarding the optimality of the set of behaviors they identify and their ability to solve new tasks in a zero-shot manner, even in the presence of function approximation errors. We evaluate the proposed methods on challenging multi-task and multi-objective RL problems and show that the algorithms introduced in this work outperform various state-of-the-art algorithms in domains with both discrete and continuous state and action spaces.
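The abstract does not specify how previously acquired behaviors are combined for a new task. As a purely illustrative sketch, not the thesis's algorithm, the block below shows one standard mechanism from the multi-task RL literature: generalized policy improvement (GPI) over vector-valued action values, where a new task is expressed as a linear preference vector over objectives. It assumes linear scalarization, and the function names (`scalarize`, `gpi_action`) and the two-objective toy values are hypothetical.

```python
from typing import List, Sequence

# Hypothetical types: one float per objective.
Vector = Sequence[float]


def scalarize(q_vec: Vector, w: Vector) -> float:
    """Linear scalarization: weighted sum of per-objective values under preference w."""
    return sum(qi * wi for qi, wi in zip(q_vec, w))


def gpi_action(q_vectors: List[List[Vector]], w: Vector) -> int:
    """Pick an action by generalized policy improvement (GPI) for preference w.

    q_vectors[i][a] is the (assumed) vector-valued Q estimate of previously
    learned policy i for action a in the current state. The returned action is
    argmax_a max_i w . Q_i(s, a), i.e. the agent follows whichever stored
    behavior looks best for this preference at this state.
    """
    n_actions = len(q_vectors[0])
    best_per_action = [
        max(scalarize(q_pi[a], w) for q_pi in q_vectors) for a in range(n_actions)
    ]
    return max(range(n_actions), key=lambda a: best_per_action[a])


# Hypothetical toy data: 2 stored policies, 2 actions, 2 objectives.
Q_EXAMPLE: List[List[Vector]] = [
    [(10.0, 1.0), (2.0, 2.0)],  # policy 0: good at objective 1 via action 0
    [(1.0, 2.0), (3.0, 9.0)],   # policy 1: good at objective 2 via action 1
]

if __name__ == "__main__":
    for w in [(1.0, 0.0), (0.0, 1.0), (0.5, 0.5)]:
        print(w, "->", gpi_action(Q_EXAMPLE, w))
```

Changing only the preference vector `w` changes which stored behavior dominates, with no additional learning; this is the kind of zero-shot reuse the abstract refers to, though the thesis's own methods and guarantees may differ from this simplified sketch.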

Keywords: Reinforcement learning; Multi-objective RL; Multi-task RL; Transfer learning; Model-based RL; Machine learning

PPGC – Graduate Program in Computing (Programa de Pós-Graduação em Computação)

Institute of Informatics (Instituto de Informática) – UFRGS

www.inf.ufrgs.br/ppgc